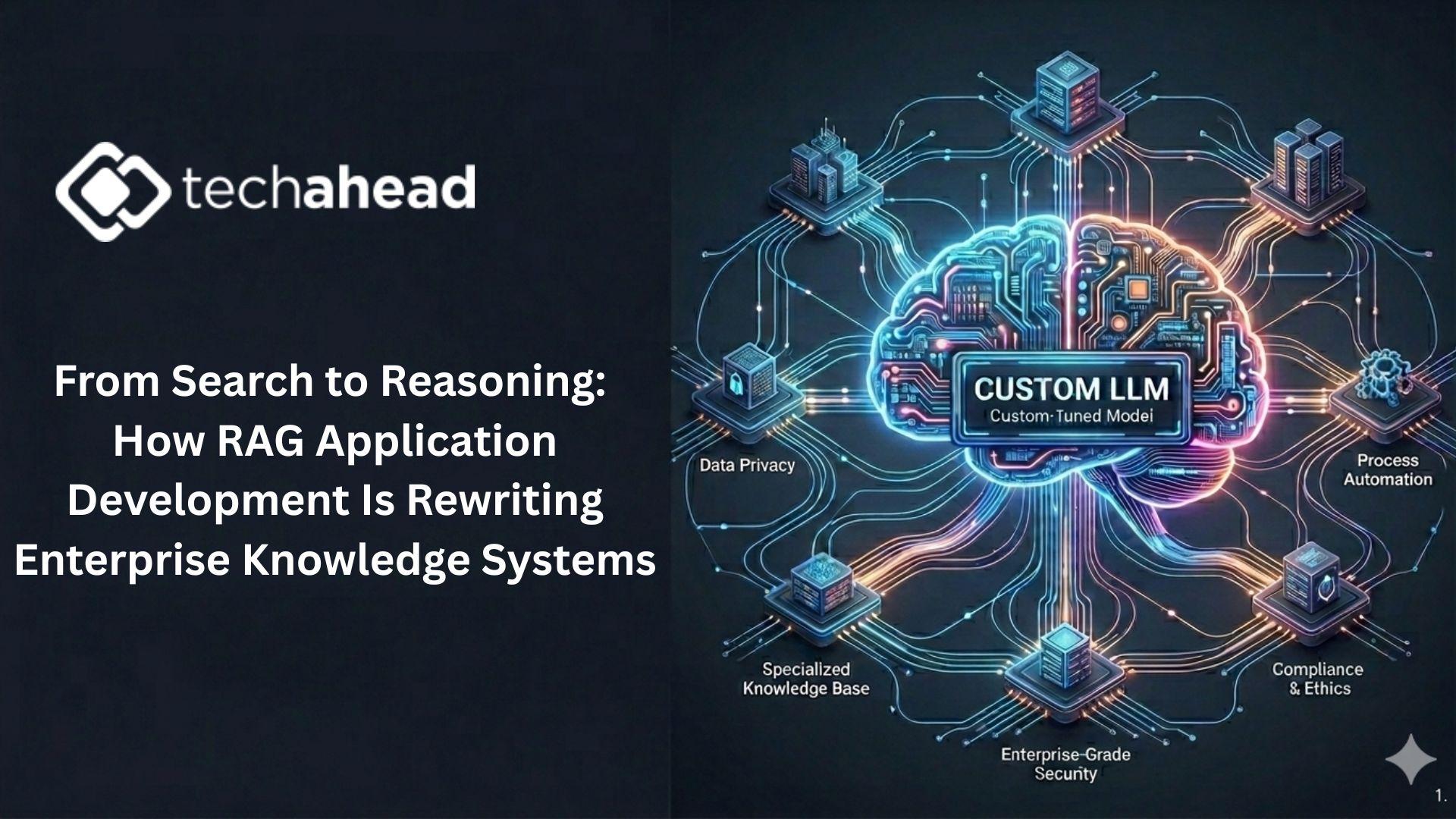

From Search to Reasoning: How RAG Application Development Is Rewriting Enterprise Knowledge Systems

Enterprises today are drowning in information but starving for insight. Every organization has terabytes of internal documentation—policies, contracts, emails, product manuals, compliance reports, research briefs, and customer conversations. Yet employees still struggle to find precise answers when they need them.

The problem isn’t lack of data. It’s the inability to transform fragmented information into contextual intelligence.

This is where Custom LLM Solutions are changing the equation. Instead of relying on generic AI models that provide broad answers, enterprises are engineering systems that understand their internal language, workflows, and regulatory landscape. When combined with advanced RAG Application Development, these systems don’t just retrieve documents—they reason across them.

In 2026, the organizations that master intelligent retrieval are the ones that move fastest.

Why Traditional Enterprise Search Failed

For decades, enterprise knowledge management relied on keyword search and hierarchical document storage. The logic was simple: organize files, index them, and let users search.

But traditional systems struggle with real-world complexity:

- Employees don’t know the exact keywords to use
- Context is fragmented across multiple documents
- Policies are updated but old versions remain searchable
- Different departments use different terminology

Imagine a compliance officer asking:

“What new regulatory risks apply to cross-border data transfers under our current vendor agreements?”

A keyword search might surface dozens of unrelated documents. But a reasoning-based system powered by Custom LLM Solutions and robust RAG Application Development can synthesize the relevant policy updates, vendor contracts, and jurisdictional rules into a coherent, actionable answer.

That shift—from retrieval to reasoning—is transformative.

The Architecture Behind Modern Intelligent Knowledge Systems

To understand why this works, we need to examine how these systems are built.

1. Semantic Embeddings Instead of Keywords

Documents are converted into vector embeddings that represent meaning rather than exact word matches. This allows systems to identify semantically similar content even when phrasing differs.
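To make the idea concrete, here is a minimal sketch of ranking documents by cosine similarity between vectors. The three-dimensional vectors are hand-assigned placeholders, not output from a real embedding model, and the names `DOC_VECTORS` and `most_similar` are hypothetical.

```python
import math

# Hypothetical, hand-assigned vectors standing in for real embedding model output.
DOC_VECTORS = {
    "Remote work policy": [0.9, 0.1, 0.0],
    "Vendor data transfer agreement": [0.1, 0.9, 0.2],
    "Office parking rules": [0.0, 0.1, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def most_similar(query_vector, doc_vectors):
    """Return document titles sorted from most to least similar to the query."""
    return sorted(
        doc_vectors,
        key=lambda title: cosine_similarity(query_vector, doc_vectors[title]),
        reverse=True,
    )

# Assumed embedding of the query "telecommuting guidelines", which shares
# no keywords with any document title.
query = [0.8, 0.2, 0.1]
ranking = most_similar(query, DOC_VECTORS)
print(ranking[0])
```

In this toy setup the remote work policy ranks first even though the query and the title have no words in common, which is the behavior keyword search cannot offer.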

2. Contextual Retrieval Pipelines

Advanced RAG Application Development uses multi-stage retrieval. First, it narrows the search space. Then, it ranks results based on contextual intent. Some systems also apply metadata filters such as document recency, department ownership, or confidentiality level.
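The stages described above might be sketched as follows. This is an illustration under stated assumptions, not a reference pipeline: the `Chunk` fields, the precomputed `similarity` score, and the term-counting reranker (a stand-in for a real reranking model) are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Chunk:
    text: str
    department: str
    updated: date
    similarity: float  # assumed: vector similarity to the query, computed elsewhere

def retrieve(chunks, department, not_before, query_terms, k=2):
    # Metadata filters: keep only chunks from the right department that are recent enough.
    eligible = [
        c for c in chunks
        if c.department == department and c.updated >= not_before
    ]
    # Stage 1: narrow the search space to the top candidates by vector similarity.
    candidates = sorted(eligible, key=lambda c: c.similarity, reverse=True)[: k * 2]

    # Stage 2: rerank the candidates. This toy scorer counts query terms in the
    # text; a production system would likely use a dedicated reranking model.
    def rerank_score(chunk):
        words = set(chunk.text.lower().split())
        return sum(term in words for term in query_terms)

    return sorted(candidates, key=rerank_score, reverse=True)[:k]

chunks = [
    Chunk("Cross-border transfers require a signed addendum", "legal", date(2025, 11, 1), 0.71),
    Chunk("Vendor onboarding checklist for procurement", "legal", date(2025, 9, 15), 0.80),
    Chunk("Cross-border transfers policy from 2019", "legal", date(2019, 3, 1), 0.95),
    Chunk("Cafeteria menu", "facilities", date(2025, 12, 1), 0.10),
]
results = retrieve(chunks, "legal", date(2024, 1, 1), ["cross-border", "transfers"], k=1)
print(results[0].text)
```

Note that the 2019 policy has the highest similarity score but never reaches the ranking stages, because the recency filter removes it first.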

3. Domain-Specific Model Alignment

Generic models may misunderstand industry terminology. Custom LLM Solutions are trained or fine-tuned to interpret enterprise-specific vocabulary, compliance language, and operational nuance.

4. Grounded Response Generation

Instead of generating answers from memory, the model references retrieved documents and produces responses grounded in verifiable sources. Many systems now include inline citations for auditability.
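One common way to achieve this is to place the retrieved passages in the prompt with numbered labels and instruct the model to cite them. The sketch below only builds such a prompt; the function name `build_grounded_prompt` and the instruction wording are assumptions, and the call to an actual model is left out.

```python
def build_grounded_prompt(question, sources):
    """Build a prompt that asks the model to answer only from numbered sources.

    sources: list of (title, passage) tuples returned by the retrieval step.
    """
    numbered = "\n".join(
        f"[{i}] {title}: {passage}"
        for i, (title, passage) in enumerate(sources, start=1)
    )
    return (
        "Answer using only the sources below. "
        "Cite each claim with its source number, for example [1]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\n"
    )

prompt = build_grounded_prompt(
    "Do cross-border transfers need extra paperwork?",
    [
        ("Data Transfer Policy v3", "Cross-border transfers require a signed addendum."),
        ("Vendor Agreement Template", "Vendors must notify us before moving data abroad."),
    ],
)
print(prompt)
```

Because each passage carries a stable number, a reviewer can trace any cited claim in the answer back to the document it came from.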

This architecture turns static knowledge repositories into interactive intelligence layers.

Measurable Business Impact

Forward-thinking enterprises are already seeing tangible outcomes.

Reduced Internal Search Time

Some organizations report reductions of up to 50% in the time spent searching for documentation. Knowledge workers can focus on decision-making instead of navigation.

Faster Onboarding

New employees can ask contextual questions rather than reading hundreds of pages of documentation. AI becomes a real-time mentor.

Improved Compliance Confidence

Because responses are grounded in up-to-date documents via RAG Application Development, organizations reduce the risk of outdated policy reliance.

Better Cross-Department Collaboration

Custom systems break down silos by interpreting information across departments, even when terminology differs.

The ROI is not theoretical—it’s operational.

Hallucination Mitigation Through Retrieval Grounding

One of the biggest criticisms of generative AI has been hallucination. When models lack specific knowledge, they can generate plausible but incorrect responses.

This is unacceptable in industries like finance, healthcare, and law.

The combination of Custom LLM Solutions with structured RAG Application Development directly addresses this risk by:

- Restricting responses to approved internal data sources
- Enforcing answer constraints
- Providing transparent source references
- Allowing confidence scoring

By grounding AI in authoritative enterprise knowledge, organizations dramatically increase reliability.
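Several of the safeguards listed above can be approximated with a simple check applied after generation. The sketch below assumes answers cite sources as bracketed numbers such as [1] and that the retrieval step exposes a top similarity score; the function name `check_answer` and the 0.5 threshold are illustrative choices, not established defaults.

```python
import re

def check_answer(answer, num_sources, top_score, min_score=0.5):
    """Return (accepted, reason) for a generated answer.

    answer: model output, expected to cite sources as [1], [2], ...
    num_sources: how many sources were supplied to the model
    top_score: best retrieval similarity, used here as a rough confidence signal
    """
    if top_score < min_score:
        return False, "retrieval confidence too low"
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    if not cited:
        return False, "no citations"
    if any(n < 1 or n > num_sources for n in cited):
        return False, "cites unknown source"
    return True, "ok"

print(check_answer("Transfers need a signed addendum [1].", num_sources=2, top_score=0.82))
print(check_answer("Transfers are always allowed [3].", num_sources=2, top_score=0.82))
```

A check like this cannot confirm that a cited passage actually supports the claim. It only catches answers that are uncited, cite sources that were never supplied, or rest on weak retrieval.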

Beyond Q&A: The Rise of Knowledge Agents

The most advanced systems in 2026 go far beyond answering questions.

They proactively:

- Monitor regulatory updates
- Flag inconsistencies between policy documents
- Alert teams to outdated procedures
- Suggest process optimizations

Imagine a system detecting that a vendor contract conflicts with a recently updated compliance policy. Instead of waiting for a human to discover the issue, it surfaces the inconsistency automatically.
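A heavily simplified version of that check can be expressed with metadata alone. The sketch below assumes each contract records the policy version it was written against, which is a hypothetical data model. Detecting a genuine conflict in wording would need a model to compare the texts, which this example does not attempt.

```python
from dataclasses import dataclass

@dataclass
class Contract:
    vendor: str
    policy_id: str
    policy_version: int  # assumed: the policy version the contract was drafted against

def find_stale_contracts(contracts, current_versions):
    """Return contracts that reference an older version of a tracked policy.

    current_versions: dict mapping policy_id to its latest version number.
    """
    return [
        c for c in contracts
        if c.policy_id in current_versions
        and c.policy_version < current_versions[c.policy_id]
    ]

contracts = [
    Contract("Acme Cloud", "data-transfer", 2),
    Contract("Globex Analytics", "data-transfer", 3),
    Contract("Initech Support", "retention", 1),
]
current_versions = {"data-transfer": 3, "retention": 1}

flagged = [c.vendor for c in find_stale_contracts(contracts, current_versions)]
print(flagged)
```

Run on a schedule, a check of this kind could feed a review queue so that the affected contracts reach a human before anyone relies on them.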

This shift—from reactive retrieval to proactive insight—is powered by deeper integration of Custom LLM Solutions and evolving RAG Application Development frameworks.

Security and Governance at the Core

Enterprise knowledge systems must operate within strict security boundaries. Modern implementations include:

- Role-based access controls
- Encrypted embedding storage
- Query logging for audits
- Data residency enforcement

Because retrieval pipelines control what information the model can access, RAG Application Development adds a security layer that open generative systems lack.
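In practice, this usually means applying access rules before retrieval so that restricted text never reaches the model. A minimal sketch, assuming each document carries an `allowed_roles` set (a hypothetical field name):

```python
def visible_documents(documents, user_roles):
    """Keep only documents the user may read, based on overlapping roles."""
    return [doc for doc in documents if doc["allowed_roles"] & user_roles]

DOCUMENTS = [
    {"title": "Employee handbook", "allowed_roles": {"employee", "legal", "finance"}},
    {"title": "Vendor contract: Acme", "allowed_roles": {"legal"}},
    {"title": "Quarterly forecast", "allowed_roles": {"finance"}},
]

# Only the filtered set would be passed on to similarity search and generation.
searchable = visible_documents(DOCUMENTS, {"employee"})
titles = [doc["title"] for doc in searchable]
print(titles)
```

Filtering at this stage, rather than redacting the model's output afterwards, means a user without the legal role cannot surface contract text through clever phrasing.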

When paired with policy-aware Custom LLM Solutions, enterprises gain AI systems that are not only intelligent but compliant by design.

The Competitive Advantage of Intelligent Knowledge

Knowledge speed is competitive speed.

Companies that can instantly synthesize internal research, operational data, and compliance updates make faster decisions. They launch products quicker. They respond to market shifts more effectively.

In contrast, organizations relying on outdated search systems experience decision lag.

In 2026, intelligent knowledge infrastructure is no longer optional—it’s strategic.

Conclusion: From Documents to Dynamic Intelligence

Enterprise knowledge has evolved from static repositories to dynamic reasoning systems.

Through Custom LLM Solutions, organizations embed domain intelligence into AI systems tailored to their operational DNA. Through advanced RAG Application Development, they ensure that this intelligence remains grounded, verifiable, and adaptable.

The companies that succeed in the coming decade will not be those with the most data—but those with the most intelligent access to it.

The future of enterprise knowledge is not storage. It is synthesis.