The Architectural Blueprint of the Modern Data Governance Market Platform

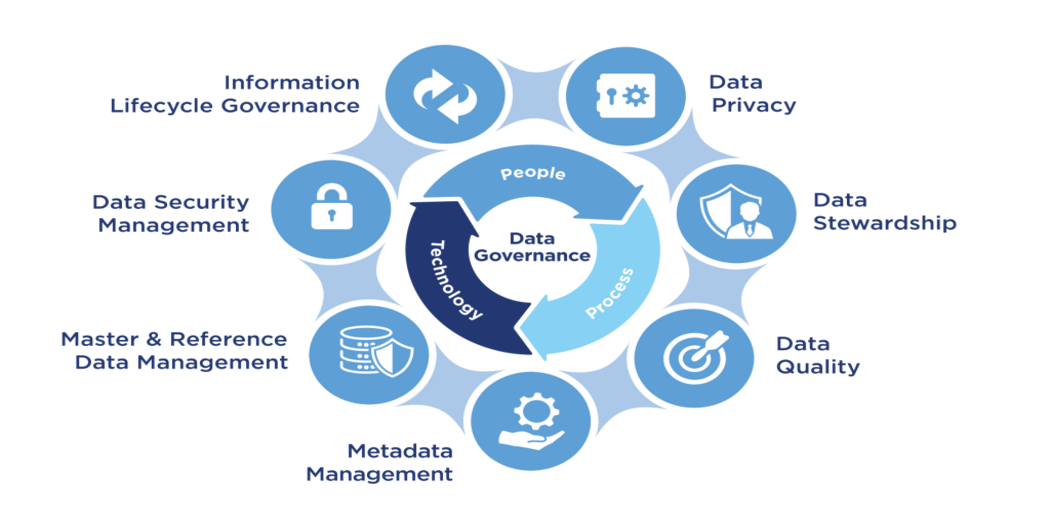

A modern data governance platform is an integrated software suite designed to operationalize and automate the principles of data governance across a complex enterprise environment. It is far more than a single tool; it is an ecosystem of interconnected modules that work in concert to manage the entire data lifecycle. The primary goal of such a platform is to provide a central hub for all governance-related activities, fostering collaboration between business users, data stewards, and IT professionals. The architecture of these platforms typically revolves around a core metadata repository, which acts as the system of record for all information about the organization's data. This repository ingests and synthesizes metadata from a wide range of source systems, creating a rich, contextualized understanding of the entire data landscape. The platform then provides a suite of tools that use this metadata to enable key governance functions. These tools transform abstract policies into tangible, automated controls and workflows, making governance a continuous, active process rather than a static, manual one.

At the heart of virtually every modern data governance platform is the Data Catalog. This module serves as a searchable inventory or "Google for the enterprise's data," allowing users of all skill levels to easily discover, understand, and evaluate data assets. The catalog automatically connects to various data sources—databases, data lakes, BI tools, and SaaS applications—and harvests their technical metadata (e.g., table names, column definitions, data types). It then enriches this technical metadata with business context. Data stewards can add business-friendly descriptions, assign ownership, apply data classification tags (like "PII" or "Confidential"), and link data assets to specific business terms defined in a glossary. The catalog also provides collaboration features, allowing users to rate datasets, add comments, and ask questions of data owners. By providing a single, unified portal for exploring all available data, the data catalog breaks down data silos, reduces the time analysts spend searching for data, and builds a foundational layer of trust by clarifying the meaning and context of data assets.
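The catalog workflow described above (harvest technical metadata, enrich it with business context, then search it) can be illustrated with a minimal in-memory sketch. This is not how any particular product works; the names `CatalogEntry`, `DataCatalog`, `register`, `enrich`, and `search`, as well as the sample asset names, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One data asset: harvested technical metadata plus steward-added context."""
    name: str                                   # e.g. "crm.customers"
    columns: dict[str, str]                     # column name -> data type
    description: str = ""                       # business-friendly description
    owner: str = ""                             # assigned data owner
    tags: set[str] = field(default_factory=set)            # e.g. {"PII"}
    glossary_terms: set[str] = field(default_factory=set)  # linked business terms


class DataCatalog:
    """Hypothetical in-memory catalog; a real platform would persist this."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        """Store technical metadata harvested from a source system."""
        self._entries[entry.name] = entry

    def enrich(self, name, *, description=None, owner=None,
               tags=(), glossary_terms=()) -> None:
        """Let a data steward add business context to an existing asset."""
        entry = self._entries[name]
        if description is not None:
            entry.description = description
        if owner is not None:
            entry.owner = owner
        entry.tags.update(tags)
        entry.glossary_terms.update(glossary_terms)

    def search(self, keyword: str) -> list[str]:
        """Case-insensitive substring search over names, columns, and context."""
        needle = keyword.lower()
        hits = []
        for entry in self._entries.values():
            haystack = " ".join(
                [entry.name, entry.description, *entry.columns,
                 *entry.tags, *entry.glossary_terms]
            ).lower()
            if needle in haystack:
                hits.append(entry.name)
        return sorted(hits)


catalog = DataCatalog()
catalog.register(CatalogEntry("crm.customers", {"id": "INTEGER", "email": "VARCHAR"}))
catalog.enrich("crm.customers", description="Master list of active customers",
               owner="jane.doe", tags={"PII"}, glossary_terms={"Customer"})
print(catalog.search("pii"))
```

A simple substring search is used here to keep the sketch short; a production catalog would more likely rely on a full-text index and ranking.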

Another critical component of a data governance platform is its Data Quality Management capabilities. A governance program is meaningless if the data it governs is inaccurate or inconsistent. This module provides a suite of tools to profile, cleanse, monitor, and remediate data quality issues. Data profiling tools automatically scan datasets to discover their statistical characteristics and identify potential anomalies, such as null values, incorrect formats, or outlier data points. Based on these profiles, data stewards can define and apply data quality rules (e.g., "A customer's email address must contain an '@' symbol"). The platform then continuously monitors the data against these rules, generating data quality scores and creating dashboards that provide a clear view of the overall health of key data assets. When issues are detected, the platform's workflow engine can automatically route them to the responsible data steward for investigation and remediation. This systematic approach to data quality ensures that the organization is making decisions based on reliable and trustworthy information.
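To make the profiling and rule-monitoring steps concrete, the following is a minimal sketch that counts null values per column and scores a dataset against named rules, including the email rule quoted above. The function names (`profile_nulls`, `evaluate`, `email_contains_at`) and the sample records are assumptions made for illustration, not part of any specific platform.

```python
from typing import Callable

Record = dict[str, object]
Rule = Callable[[Record], bool]


def email_contains_at(record: Record) -> bool:
    """Rule from the text: a customer's email address must contain '@'."""
    email = record.get("email")
    return isinstance(email, str) and "@" in email


def profile_nulls(records: list[Record], columns: list[str]) -> dict[str, int]:
    """Basic profiling: count missing (None) values per column."""
    return {col: sum(1 for r in records if r.get(col) is None) for col in columns}


def evaluate(records: list[Record], rules: dict[str, Rule]) -> dict[str, dict]:
    """Return, per rule, a pass-rate score in [0, 1] and the failing row indexes."""
    report = {}
    for name, rule in rules.items():
        failures = [i for i, r in enumerate(records) if not rule(r)]
        score = 1.0 - len(failures) / len(records) if records else 1.0
        report[name] = {"score": score, "failures": failures}
    return report


customers = [
    {"email": "a@example.com"},
    {"email": "not-an-email"},
    {"email": None},
    {"email": "b@example.org"},
]
print(profile_nulls(customers, ["email"]))
print(evaluate(customers, {"email_contains_at": email_contains_at}))
```

The list of failing row indexes is what a workflow engine could route to the responsible data steward; the score is the kind of figure a data quality dashboard would display.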

Tying everything together are the platform's Policy Management and Data Lineage functionalities. The policy management module provides a central repository for defining, documenting, and enforcing the rules that govern data. This includes policies for data access, data retention, data sharing, and compliance. The platform can often automate the enforcement of these policies by integrating with underlying data platforms. For example, a policy stating that only members of the HR department can access employee salary data can be automatically translated into an access control rule in the data warehouse. Complementing this is the data lineage module, which provides a visual, end-to-end map of the data's journey. It shows where a piece of data originated, what transformations it has undergone, and where it is used in reports and dashboards. This "breadcrumb trail" is invaluable for impact analysis (e.g., "If we change this source system column, what reports will be affected?"). It also gives auditors the proof of data provenance required for regulatory compliance, which makes lineage essential for both operational efficiency and risk management.
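Both ideas in this paragraph can be sketched briefly. The first function below renders the HR salary policy as an access control statement; the second answers the impact analysis question by walking a lineage graph downstream from a changed asset. Everything here is a hypothetical illustration: the function names, the table and role names, and the lineage edges are invented, and the `GRANT` syntax is only indicative because the exact form differs between data warehouses.

```python
from collections import deque


def policy_to_grant(table: str, role: str, privilege: str = "SELECT") -> str:
    """Render a simple access policy as a GRANT statement (illustrative syntax)."""
    return f"GRANT {privilege} ON {table} TO ROLE {role};"


def downstream_impact(lineage: dict[str, list[str]], changed: str) -> list[str]:
    """Breadth-first walk of the lineage graph: everything fed by `changed`."""
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    seen.discard(changed)  # guard against cycles that lead back to the start
    return sorted(seen)


# Hypothetical lineage: each key feeds the assets listed as its values.
lineage = {
    "crm.customers.email": ["staging.customers"],
    "staging.customers": ["warehouse.dim_customer"],
    "warehouse.dim_customer": ["report.churn", "dashboard.sales"],
    "erp.orders": ["warehouse.fact_orders"],
}

print(policy_to_grant("hr.employee_salary", "hr_department"))
print(downstream_impact(lineage, "crm.customers.email"))
```

Representing lineage as an adjacency list keeps the traversal simple; real platforms typically store lineage at column level with transformation details attached to each edge.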
